A penny saved is a penny earned. Benjamin Franklin's quote is apropos for the importance of saving money, but if you are saving money for retirement, you hope that you can get a better rate of return than that. Considering the number of people on Social Security, this is all the more relevant. Of the millions on Social Security, many rely on it as a source of retirement income. If Social Security does not provide a proper rate of return, then the livelihood of those who have retired is greatly diminished. Enter the replacement rate.

The replacement rate is the percentage of a worker's pre-retirement income that is paid out by a pension program for retirement. The ratio is commonly used to measure how adequate pension benefits can be. Before delving into details, let's keep in mind that financial advisors generally agree that a 70 percent replacement rate is adequate in order to maintain one's pre-retirement standard of living.

The replacement rate has been the subject of some debate in years past because the rate can come out differently depending on how it is measured (Pang and Schieber, 2014). Financial advisors typically compare retirement income to the earnings in the year prior to retirement. The problem with this method is that income in the last year can be quite variable, and does not necessarily reflect one's standard of living. The Social Security Administration (SSA) has a different method. The SSA divides retirement income by average career earnings. This sounds reasonable until you reach the part where the SSA uses wage indexing, which credits an individual with pre-retirement earnings that never existed. As a result, it overstates pre-retirement earnings and understates retirement benefits relative to those earnings. The problem with the SSA's indexing is that it does not reflect the reality of financial planning, to the point where the replacement rate seems inadequate to some, especially to those who seek to expand Social Security because they think its benefits are too modest.
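To see how much the choice of denominator matters, here is a minimal sketch. The worker and every dollar figure are hypothetical, made up purely for illustration; the only thing taken from the discussion above is that the same benefit is divided by two different measures of pre-retirement income.

```python
def replacement_rate(annual_benefit, pre_retirement_income):
    """Return the benefit as a percentage of pre-retirement income."""
    if pre_retirement_income <= 0:
        raise ValueError("pre-retirement income must be positive")
    return 100.0 * annual_benefit / pre_retirement_income


# Hypothetical worker: all of these figures are assumptions, not real data.
annual_benefit = 20_000
final_year_earnings = 50_000          # denominator financial advisors tend to use
wage_indexed_career_average = 60_000  # SSA-style denominator after wage indexing

advisor_rate = replacement_rate(annual_benefit, final_year_earnings)
ssa_rate = replacement_rate(annual_benefit, wage_indexed_career_average)

print(f"Final-year method:   {advisor_rate:.1f}%")
print(f"Wage-indexed method: {ssa_rate:.1f}%")
```

The benefit check is identical in both cases. Only the yardstick changes, and a larger denominator makes the same benefit look smaller.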

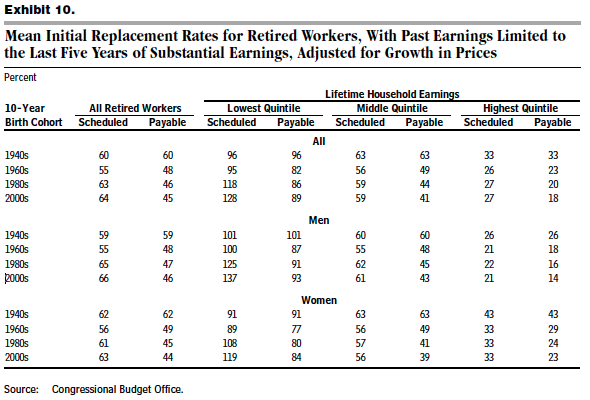

There are better ways to find a replacement rate than what the SSA uses (Biggs et al., 2015). The Congressional Budget Office (CBO) recently released some long-term projections on Social Security. Among those projections are mean initial replacement rates for retired workers. The CBO took one of the suggestions from the bipartisan Technical Panel on Assumptions and Methods and used the last five years of substantial earnings (i.e., equal to at least half of the individual's career-long average) as a basis for pre-retirement income, and decided to focus on those with full working careers (as opposed to short careers). The results for some of those demographics are in the accompanying Exhibit.
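As I read that description, the pre-retirement base could be sketched roughly as follows. This is not the CBO's actual model: the function name, the simple averaging of the five selected years, and the earnings history are all my own assumptions for illustration.

```python
def substantial_earnings_base(earnings_by_year, n_years=5):
    """Average the last n_years of 'substantial' earnings.

    A year counts as substantial if earnings are at least half of the
    career-long average. Averaging the selected years is an assumption.
    """
    if not earnings_by_year:
        raise ValueError("need at least one year of earnings")
    career_average = sum(earnings_by_year) / len(earnings_by_year)
    threshold = 0.5 * career_average
    # Keep chronological order so the slice below picks the latest years.
    substantial = [e for e in earnings_by_year if e >= threshold]
    last_years = substantial[-n_years:]
    return sum(last_years) / len(last_years)


# Hypothetical earnings history, oldest year first (made-up numbers).
earnings = [30_000, 40_000, 45_000, 50_000, 5_000, 52_000, 55_000, 10_000]
base = substantial_earnings_base(earnings)
print(base)
```

In this made-up history, the two weak years (5,000 and 10,000) fall below half the career average and are skipped, so a bad final year does not drag down the measure of pre-retirement income.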

Before proceeding, I do want to point out the difference between scheduled and payable benefits. Scheduled benefits are the amount that the SSA appropriates in spite of any trust fund balances. Payable benefits are what one is going to receive after the trust fund is exhausted in 2033. For those who are looking to retire in 17 years or more, I would look at the payable rates since those will be more realistic for you.

One interesting finding is that, in terms of replacement rates, Social Security does more for the poorest than it does for the middle and upper classes, which makes sense given the progressive nature of the Social Security benefits formula. However, it does mean that it's not necessarily a good deal for those who are not in the lowest quintile. Another demographic to look at is age, via the birth cohorts. These data show once again that older generations get a better payout while younger generations have to shoulder a higher tax burden in order to receive a lower replacement rate.

Now that we have replacement rates that reflect financial reality more adequately than the SSA's, what are the implications of these rates? First is that the notion of a "retirement crisis" in America is overblown. Considering that one only needs about 70 percent of pre-retirement income in order to maintain pre-retirement living standards, the figures are not nearly as bleak as one might think. Such figures deliver a blow to those who argue for expanding Social Security because the replacement rates do not justify such an expansion.

These findings do come with a flip side, which is that Social Security is not a complete disaster. We should still be worried that the trust fund is going to be exhausted in 2033. Even more to the point, these findings do not negate the fact that the government shouldn't take 12.4 percent of my earnings for Social Security because in spite of these replacement rates, there are still better retirement investment plans than Social Security. Investing in something as low-risk as Treasury bonds would increase the replacement rate by about 41 percent, to say nothing of the rate of return on something such as investing in the stock market or AAA corporate bonds. Imagine if you could retire in a way that is more than merely adequate. I would be happy to see full privatization of retirement savings so that Americans can enjoy retirement to the fullest. In the interim, there are policy alternatives to reform the benefit formula (Blahous, 2015). Although it would be nice to see Social Security reform that would be headed in the direction of privatization, if there is one takeaway here for me, it is that these latest data do not justify the call for more Social Security benefits or higher payroll tax rates.

2-17-2016 Addendum: It looks like the CBO had to make some revisions from its original analysis. Although these downward revisions (see below) bring the revised replacement rates below what financial advisors would recommend, Social Security still covers a good chunk of the required replacement rate. While these numbers strengthen the argument for a "retirement crisis," they also strengthen the argument for private retirement accounts.

The political and religious musings of a Right-leaning, libertarian, formerly Orthodox Jew who emphasizes rationalism, pragmatism, common sense, and free, open-minded thought.

Tuesday, December 29, 2015

Thursday, December 24, 2015

Should There Be a Tax on Unhealthy Foods and Drinks?

I'm not about to have Christmas dinner, but I do know that Christmas dinners, and certainly those in an American context, can be some of the largest and most elegant family meals out there. Christmas meals can vary based on family tradition and/or country, but there is typically some sort of meat dish, such as a Christmas ham, roast, or gamey bird. There is also some sweet dessert, such as Christmas pudding, pie, or cookies. Mashed potatoes or dinner rolls spread with butter also make it to the Christmas table. For many, it sounds delectable. For others, the overconsumption of excessively fatty and sugary foods could be viewed as a manifestation of the obesity problem in this country. What would happen if being able to have such a Christmas meal were a larger financial burden? What would happen if that were not just for Christmas, but people couldn't eat fatty or sugary foods year-round? What would happen if the culprit were not a downright ban on foods, but rather a food tax that was high enough to make a health nut's dream come true?

These were the sort of questions I was asking myself as I was reading a recent research report from the Left-leaning Urban Institute entitled Should we tax unhealthy foods and drinks? The report recommends a tax on unhealthy foods to deal with the economic and social costs surrounding obesity since a moderate soda tax could, according to their model, reduce obesity by 1 to 4 percentage points (Marron et al., 2015, p. 2). As the Urban Institute report points out, obesity costs the U.S. healthcare system up to $300 billion per annum (ibid., p. 5). The Brookings Institution illustrated the economic costs of obesity a few years back. Could this type of tax help deal with our obesity issues?

I'm not thrilled prima facie about more taxes not only because I think tax rates are already too high, but also because much like a cigarette tax, this comes off more as a sin tax than it does a Pigovian tax. Increased government intervention in the healthcare industry, like we have witnessed with Obamacare, increases the extent to which health issues such as obesity become socialized costs. Nevertheless, there is a huge element of punishing the "sinner" with higher tax rates. Even so, such taxes are relatively less objectionable than a downright ban on certain foods. A food tax or fat tax would act as a consumption tax, and on the bright side, consumption taxes are indirect taxes. Indirect taxation notwithstanding, I do have to wonder about effectiveness.

The Urban Institute lists places that have already implemented such taxes, emphasizing that the structure of the tax can play an important role in the tax's success or failure. For instance, Denmark instituted a 16DKK ($2.70USD) tax per kilogram on saturated fats in October 2011. This tax exemplifies the high tax rates for which Denmark is well-known. It is also noteworthy that it only took about a year before Denmark, the first country to enact a fat tax, repealed it. Why? For one, it wasn't lowering fat consumption. Danes were simply heading over to Germany to stock up on fattier foods. It was also a bureaucratic nightmare for Danish food producers and distributors. It also contributed to inflation in Denmark. Overall, the effects of such a tax were decidedly negative for Denmark (Snowdon, 2013). Perhaps people have learned from the Danish debacle. Other countries that have implemented such taxes are Hungary, France, and Mexico. At least for Mexico, the tax does not seem to have accomplished anything significant. Conversely, some laud the Hungarian food tax as a success.

If one were to advocate for such a tax as the Urban Institute does, then its merit depends on what is taxed, at what rate it is taxed, and the consequences of said tax (e.g., consumer behavior, product substitution, improved healthcare). In the Urban Institute report, the authors point out that the type of food affects sensitivity to prices, what is known in economic jargon as price elasticity of demand. Soda has an elasticity of 0.9, which is higher than the elasticity [of 0.5] of other foods (e.g., fast food, produce). This elasticity could help explain why soda taxes are more popular than other food taxes, and why most of the countries that have implemented such taxes go after such sugary products.
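For those who want to see what those elasticities imply, here is a back-of-the-envelope sketch. The 0.9 and 0.5 figures come from the report as cited above; the 10 percent price increase is a hypothetical number of my own, and the calculation assumes the tax is fully passed on to shoppers and that elasticity stays constant, neither of which is guaranteed.

```python
def pct_change_in_quantity(elasticity_magnitude, pct_price_change):
    """Approximate percent change in quantity demanded.

    Uses the textbook approximation %dQ = -elasticity * %dP, with the
    elasticity given as a positive magnitude.
    """
    return -elasticity_magnitude * pct_price_change


hypothetical_price_increase = 10.0  # percent; an assumption for illustration

soda_change = pct_change_in_quantity(0.9, hypothetical_price_increase)
other_food_change = pct_change_in_quantity(0.5, hypothetical_price_increase)

print(f"Soda:        {soda_change:.1f}%")
print(f"Other foods: {other_food_change:.1f}%")
```

Under those assumptions, the same price increase cuts soda purchases by nearly twice as much as purchases of other foods. Note that this says nothing about what people buy instead, which is the substitution problem.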

Aside from collecting government revenue, the main function of a tax is to disincentivize behavior, which is probably why the Urban Institute recommends a food tax. The Urban Institute is astute enough to realize that taxes are most effective when there is a tight relationship between that which is being taxed and the negative externality. For instance, we know that cigarettes are the primary cause of smoking-related illness, and thus there is a strong linkage between a cigarette tax and the harm it targets. Dealing with obesity is not that simple. Obesity has multiple causes. Additionally, people have different reactions to food intakes and the effects of obesity, and going after fat or sugar with such a broad stroke is counterproductive and hubristic. Perhaps this is a reason why the Urban Institute recommends going after sugar dosages instead of a straight-up tax on sugary goods (Marron et al., p. 14). Furthermore, much as the Cato Institute specifies, there is another unintended consequence of a food tax or soda tax: regressivity. A food tax or soda tax would hit the poor much more than the rich because the poor spend a higher percentage of their income on food. A food tax would be less popular than a sin tax on cigarettes. Why? Not everyone smokes, but everyone eats food. It would hit lower-income families harder, which is certainly a drawback to such a tax (Marron et al., p. 2). Since people need to eat to live, the substitution effect is all the more serious an issue than it would be with cigarettes (Fletcher et al., 2013).

The question of whether poor dietary decisions are a negative externality notwithstanding, we have to ask ourselves what to do with a lack of evidence that such taxation works, especially in light of its unfairness and inability to counter the substitution effect. A 2014 article from the Journal of Public Health (Cornelsen et al., 2014) summarizes it well by pointing out that the taxes might reduce certain consumption by a small amount, but fail to take into account food substitutions. As even the Urban Institute admits, there is a lack of data in determining the more indirect effects that could undermine the overall success of such taxes (Marron et al., p. 3). Food taxes, fat taxes, and soda taxes are not going away anytime soon. While we wait on the data regarding the overall effectiveness of the taxes, might I suggest removing subsidies for sugar, national school lunches, and agricultural protectionism via the Farm Bill that allows such ingredients as high-fructose corn syrup to flourish? We can correct the government policies promoting obesity, thereby making the American government less hypocritical if/when it attempts to lower obesity rates with these consumption taxes. As the Urban Institute study showed, obesity rates could only experience a reduction of 1 to 4 percentage points at best, which would only moderately decrease what is currently a 34.9 percent obesity rate in this country. Even a British Medical Journal study showed that for fat taxes to even begin having an effect, the taxes would need to be 20 percent, which is hardly an insignificant amount. We cannot count on some overbearing government regulation to do the work. We need to encourage others around us to lose weight, and more importantly, we need to take responsibility for our own health if we don't want obesity to be a cost to the economy, society, or ourselves.

Thursday, December 17, 2015

Parsha Vayigash: Why We Don't Embarrass or Criticize People Publicly

Sometimes, I am amazed at the world of communication that social media platforms such as Facebook have opened up for people. I am also perturbed at how such communication has devolved, especially when I see Facebook "discussions" on politics or religion. The line between criticizing ideas and criticizing people has been all the more blurred, and people will hurl insults at people simply due to disagreement. It is all too easy to forget there is another human being on the other end of the conversation. Enough people feel justified in this sort of public lambasting by saying "I was offended", "they shouldn't have posted if they were going to be that stupid in the first place," or "I was just telling it to them like it is." While this behavior does not describe every single person's interactions on social media, it has sadly become a trend where we desensitize ourselves to the other because that level of self-gratification or need to stroke one's ego has become of such great importance. For so many people, such platforms become a carte blanche to embarrass people simply because it's expedient.

Contrast that with Jewish ethics, and what we see in this week's Torah portion. We're at the point in the Joseph story where Judah begs for Benjamin's life. Joseph's brothers did not want to make the same mistake that they did with Joseph. Judah argues that the news of Benjamin's imprisonment would be ever so deleterious to Jacob's health. After this explanation, the text says that Joseph could not restrain himself anymore. He cleared the room so it was just Joseph and his brothers (Genesis 45:1). It was at that moment that Joseph revealed his true identity (ibid. 45:3), and the family reunion thus took place. The question I have here is why Joseph felt the need to clear his Egyptian entourage out of the room. What exactly was Joseph doing by making the rest of the conversation with his brothers a private one?

For one, this was a personal matter. Joseph couldn't hold back anymore (45:1). He realized that his brothers had gone through the teshuvah process and repented, and thus wanted the moment to be an intimate, family moment (JPS edition of Tanach). There was more than a sense of intimacy and privacy for its own sake to consider. According to Rashi, Joseph cleared out the room because he could not bear having his brothers shamed in front of bystanders when it was announced that the brothers sold Joseph into slavery. The Ramban adds to the reasoning. The Ramban says that letting the Egyptians know what the brothers did to Joseph would have put the brothers in danger. After all, if this is how they treated Joseph, who is now the right-hand man of the most powerful ruler of the era, how would they treat the rest of them? They could have been denied access to the country or even worse!

What can we learn from this rabbinic commentary? Joseph was clearly in a state of emotional disarray. Joseph could no longer put up the façade (45:1), and his cries were so loud that they were of universal concern to the Egyptians (R. Hirsch's commentary on 45:3). Even in his emotional state, Joseph was able to keep a clear enough head to save his brothers from embarrassment. He acted in such a way that didn't compound the guilt that his brothers already felt for what they did to Joseph in days past.

Joseph was not just being a tactful statesman. He was outlining how we should conduct ourselves with our interpersonal relations, all the more so when we're under emotional duress. Given what his brothers put him through, Joseph arguably had every right to emotionally torment them, throw them in prison, or even execute his brothers. Instead, the way in which Joseph identified himself showed that he had enough foresight to realize that his words would have important consequences if uttered publicly. All too often, people misunderstand situations because they don't have enough context to understand what is going on. Author Stefan Emunds once said that 90 percent of arguments are caused by misunderstandings, and 9.9 percent by conflicts of interest. Joseph had the wisdom to realize that his words could easily cause misunderstanding, and thus kept the conversation private. He was also emotionally aware at that moment that his brothers were created in His Image, and should be treated as such. If rebuked publicly, the consequences for Joseph's brothers would have been dire. The Talmud (Baba Metzia 59a; Ketubot 67b) teaches that it's [metaphorically] better to throw oneself into a furnace than to embarrass another in public. Joseph shows us not simply what to utter, but how, where, and when to utter what needs to be said. May we learn from Joseph's example and improve upon our speech ethics along the way.

Monday, December 14, 2015

Does Affirmative Action for College Admissions Cause a Mismatch Effect?

Last week, the Supreme Court allowed Abigail Fisher to reargue her case in Fisher v. University of Texas. To recap the case, plaintiff Abigail Fisher was denied entry to the University of Texas. She filed suit by saying that the University discriminated against her on the basis of race, and thus violated the Constitution. The case made it all the way up to the Supreme Court in 2012. In 2013, the Supreme Court ruled that the Fifth Circuit Court failed to apply strict scrutiny, which sent the case back to the Fifth Circuit Court. The case worked its way up to the Supreme Court once more, and here we are. During the oral arguments for the case this past week, Justice Antonin Scalia made the controversial statement that "there are those who contend that it does not benefit African-Americans to get them into the University of Texas where they do not do well, as opposed to have them go to a less-advanced school, a slower-track school where they do well." Top Democrats didn't waste much time in calling Scalia a racist. Whatever his intentions were, Scalia has reignited the affirmative action debate in this country once more, specifically in context with the mismatch effect.

The theory behind the mismatch effect in a nutshell: a school offers a considerably large preference to a student due to race in the hopes that the minority student will succeed with the presented opportunity to attend a more elite university. The mismatch takes place because the accepted student is not prepared for the academic rigor of a more selective university, and goes through unnecessary, preventable inconvenience and suffering (e.g., poor grades, changing majors, dropping out, transferring to another college) that would most probably not have taken place had the student attended an institution of higher learning that better reflected the student's academic abilities. This theory can also be applied to "legacy students" and students on athletic scholarships, but in this context, it is applied to students of certain races since students of those races are provided with the preferential treatment.

This has always been a contentious theory. Given the recent events in Baltimore and Ferguson, the rise of the Black Lives Matter movement, and increased racial tensions in this country, such a theory is all the more heated. Interracial relations notwithstanding, it's understandable why this would be a hot-button issue. If the mismatch theory is indeed a reality, it undermines the primary argument for affirmative action in college admissions, which is that affirmative action helps disadvantaged minority groups. Politically speaking, there is a lot riding on this theory, which is why we should have cooler heads prevail in asking the question of whether the mismatch effect is mere theory or reality.

The main proponent of the mismatch effect, UCLA Professor Richard Sander, has been arguing at least since 2004 that affirmative action policies for college admissions have indeed caused this mismatch effect, most notably in his book Mismatch. Unsurprisingly, this has drawn much criticism, to which Sander has replied. The criticism was not limited to the initial wave shortly after the release of Sander's paper. Matthew Chingos of the Urban Institute argued last Thursday that the mismatch theory doesn't withstand scrutiny, an argument he also made back in 2013 and 2009. A couple of economists found that in the field of law, removing racial preferences would actually reduce the number of African-Americans who pursue law degrees at all (Rothstein and Yoon, 2008). Other economists have followed suit in criticizing the mismatch theory (e.g., Kidder and Lempert, 2014; Kurlaender and Grodsky, 2013; Dale and Krueger, 2011; Ho, 2007).

It should also be no surprise that there are other economists aside from Sander who find evidence in favor of the mismatch theory, particularly for students who pursue STEM degrees (Arcidiacono et al., 2013) and for law students (Williams, 2013). Along with two other colleagues, an MIT economist found that students learn more in academically homogeneous classes than they do in academically heterogeneous classes (Duflo et al., 2010). Back in August, the Heritage Foundation fleshed out the details of the empirical evidence in a policy report that shows a mismatch effect.

The contention between affirmative action scholars and empiricists does not seem to be about the outcome per se (see Brief of Empirical Scholars from 2012 case; also read Sander's 2012 amicus brief), but rather about whether affirmative action is a causative agent. Let's set aside for a moment that one issue here is that we could use better data in measuring the mismatch effect (Arcidiacono and Lovenheim, 2015, p. 28).

I don't like categorizing individuals into such broad racial categories. However, since society is so gung-ho to do so, let's go through some statistics. Since 1976, we have seen the percent of students enrolled for college increasingly shift to have more African-Americans and Hispanic Americans. Based on Department of Education data, African-Americans went from representing 9.6 percent of enrollees in 1976 to 14.9 percent in 2012, which is pretty good considering that African-Americans represent 13.2 percent of the population. Even in spite of an enrollment rate that is slightly higher than demographic representation in the general American population, African-Americans have a lower completion rate than Caucasian Americans by approximately 20 percent. For those select minority demographics that still make it, there is still a racial GPA gap (Arcidiacono et al., 2012).

I just outlined some unfortunate realities that point to racial gaps, particularly in terms of GPA and completion rates. Although opponents of mismatch theory like to criticize the theory, one thing I couldn't find was an alternative theory that would be more plausible. While it might be true that some minority students thrive by being around students with better academic preparedness, the aggregate data show that it's not the case for African-American students attending a four-year college. If African-American and Hispanic students are statistically more likely to receive a less-than-stellar K-12 education, it is certainly within the realm of plausibility, not to mention probability, that they would have greater difficulties handling a strenuous academic workload since the quality of K-12 education received to help them prepare for post-secondary education is, in many cases, inadequate.

There is a certain disquietude to the fact that diversity based on skin color has garnered such emphasis in college admissions, which is something I brought up when reflecting on the initial Fisher v. University of Texas case back in 2012. Balkanization and separation of people based on the color of their skin in an increasingly multicultural and ethnically diverse country only divides this country further. While the economists fight out the particulars of the data and evidence, I have to wonder about alternatives that can be pursued to mitigate certain related issues.

A few alternatives can be to improve recruitment efforts for talented lower-income students, create summer programming for these students to catch up academically, create additional tutoring opportunities for these students, decrease legacy admissions or student athletic scholarships so that these lower-income students can be admitted, or create some transparency on student data so one can compare how they match up to others' credentials. However, these alternatives are stopgaps because they don't go deep enough into the issue. If the issue is indeed lack of preparedness, then the focus should be on K-12 education so these students can handle college. Or better yet, we can make K-12 education comprehensive and informative where they can develop enough to the point where they don't need to go to college, a pursuit that can hardly be considered to have a 100 percent success rate. While I am curious as to see what education reforms are passed to help minority students, and indeed all disadvantaged students, a policy that agitates racial relations and has questionable outcomes is not something I would continue to do with an attitude of "business as usual."

Thursday, December 10, 2015

Mandated Fuel Economy and Why the Government Should Scrap CAFE Standards

I'm one for wanting to protect the environment. After all, we only have one environment. If we mess it up, the planet becomes uninhabitable. However, we cannot let feelings for wanting to preserve the environment muddle our sense of what makes for good environmental policy. If we do, all we get in return is feel-good policy that most probably worsens the quality of life. It's something to keep in mind when looking at a policy like the U.S. government's Corporate Average Fuel Economy (CAFE) standards.

Congress passed the first set of CAFE standards back in 1975, and the Department of Transportation (DoT) has administered them ever since. Every so often, Congress has at least been mindful enough to update the standards. While there were environmental concerns about increasing fuel emissions, the primary concern in 1975 was dealing with oil shortages in light of the Arab Oil Embargo. Although we don't have to deal with an oil embargo in 2015, legally requiring certain mileage standards sounds intuitive enough. Not only do more fuel-efficient vehicles consume less of a scarce resource, but they also release fewer harmful emissions into the atmosphere. After all, looking at the historic average fuel efficiency of light-duty vehicles, it has increased [for passenger cars] from 24.3 miles per gallon in 1980 to 36.0 in 2013 (DoT).

While one might be prone to attribute increased fuel efficiency to CAFE standards, causation is tenuous. I can just as easily attribute the increased fuel efficiency to technological development. As a matter of fact, a 2015 news release on a study conducted by the National Academies of Sciences shows that most of the reduction in fuel consumption from 2015 to 2025 will not come from CAFE standards, but from technological improvements in gasoline internal combustion engines. An economist from MIT actually found that if CAFE standards did not exist, fuel economy would have increased by 60 percent [instead of the 15 percent by which it actually increased] (Knittel, 2011). More to the point, these standards introduce penalties in order to incentivize more fuel-efficient cars. This doesn't act as a direct subsidy, but indirectly attempts to affect producer behavior with some negative reinforcement. Does said negative reinforcement have the desired effects?

The Heritage Foundation recently released a policy analysis on how bad policies increase the cost of living for the American people. One of those policies was the implementation of CAFE standards. According to Heritage Foundation calculations, CAFE standards cost the American people $55 billion per annum, which comes out to $448 per household.

This research also found that prior to Obama's 2009 mandate to add an extra nine miles per gallon to fuel efficiency over a five-year period, automobile prices were decreasing. Only after Obama's mandate did automobile prices start to rise again. If government mandates expect that rapid an improvement in such a short time, especially considering that it takes time to develop, test, and incorporate such changes, it's no surprise automobile prices shot up once the mandate took effect. Fuel economy standards will continue to add costs. According to a report from the Center for Automotive Research (CAR, p. 27), the 2017-2025 CAFE standards will add anywhere from $3,734 to $9,790 to the sticker price of the car, depending on automobile type. Another factor to consider is that a number of automobile manufacturers would rather pay CAFE fines than comply, which also raises the question of how much CAFE standards affect fuel economy.

Price increases affect the consumer through what is called price elasticity of demand. Essentially, price elasticity is the sensitivity with which consumers respond to price changes [expressed as the ratio of the percentage change in quantity demanded to the percentage change in price]. The more strongly consumers respond to a price change, the higher the price elasticity of demand. Automobiles are more likely to generate a higher price elasticity of demand because a 10 percent change in an expensive purchase is going to be more impactful than a 10 percent price change in bananas. Raising the price on an elastic good means that consumers either forgo purchasing a new automobile or delay the purchase by driving around a clunker. A study from the National Bureau of Economic Research (Jacobsen and van Benthem, 2013) not only confirms that CAFE standards caused enough of a price increase for owners to postpone scrapping their old cars, but also finds that 13 to 23 percent of the expected gains from fuel efficiency are lost because of the used vehicle market.
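To make the ratio concrete, here is a minimal sketch in Python of the simple (non-midpoint) elasticity calculation. The function name and all of the quantities and prices are hypothetical numbers chosen purely for illustration; they do not come from any of the studies cited here.

```python
def price_elasticity_of_demand(q_old, q_new, p_old, p_new):
    """Percentage change in quantity demanded divided by percentage change in price."""
    pct_change_quantity = (q_new - q_old) / q_old
    pct_change_price = (p_new - p_old) / p_old
    return pct_change_quantity / pct_change_price


# Hypothetical: a 10 percent price increase on a car cuts sales by 12 percent.
cars = price_elasticity_of_demand(q_old=100, q_new=88, p_old=30_000, p_new=33_000)

# Hypothetical: a 10 percent price increase on bananas cuts sales by 3 percent.
bananas = price_elasticity_of_demand(q_old=100, q_new=97, p_old=0.60, p_new=0.66)

print(f"cars: {cars:.2f}, bananas: {bananas:.2f}")
```

Under these made-up numbers, the absolute value of the elasticity for cars is above 1 (elastic) while the one for bananas is below 1 (inelastic), which is the distinction the paragraph above is drawing.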

There is more than the sticker price of the automobile to consider. CAFE standards could very well cost lives. How so? The most efficient way for automobile OEMs to meet fuel economy standards is to make the vehicles lighter (e.g., converting steel to aluminum). Weight disparities create greater risk for those driving lighter cars. For instance, the National Academies Press found that making automobiles lighter killed up to 2,600 additional individuals in 1993, and the Cascade Policy Institute found that CAFE standards took up to 4,500 lives in 1997.

Even if you want to argue that there are net benefits to CAFE standards (e.g., Council on Foreign Relations, ConsumersUnion), I would still argue that better policies could be implemented, especially since consumers are more likely to put emphasis on the increased upfront cost of a car than on the longer-term fuel efficiency savings (Sallee et al., 2015). We can talk about feebates, but I would put more emphasis on the fuel tax, specifically on reforming the fuel tax to be more efficient. In comparison to other policy alternatives, fuel economy standards are six to fourteen times more expensive than a consumption-reducing tax (Karplus et al., 2013). But simply saying "let's increase the fuel tax" might not cut it. As I have brought up in the past, we should replace the fuel tax with a mileage-based user fee. A mileage-based user fee would be an improvement over the status quo, avoiding most of the problems that come with CAFE standards while still accomplishing their primary goals.

3-4-2016 Addendum: The Heritage Foundation just released a policy brief showing how, among other things, CAFE standards increase the sticker price of an automobile by $3,400 while only reducing the global temperature by a couple-hundredths of a degree Celsius at best.

Monday, December 7, 2015

Why Jews Publicize the Miracle of Chanukah

Kislev 25 (this year, it begins at sunset on December 6 on the Gregorian calendar) marks the beginning of Chanukah, which is the Jewish Festival of Lights. The primary mitzvah for this holiday is to light the menorah. The aspect of lighting the menorah that I would like to focus on this year is where one is supposed to light the menorah. According to the Talmud (Shabbat 21b), the Chanukah candle is supposed to be placed at the door of the house. If one lives on an upper floor of a building, it is to be placed by a window. The only exception to this rule is if one lives in dangerous times where such an act would endanger one's life (also see Mishneh Torah, Megillah v'Chanukah, Ch. 4). The Talmudic sages (חז״ל) go out of their way to make the point that the mitzvah should be public. We aren't commanded to publicize the mitzvah of lighting Shabbat candles, so why are we given the rabbinic commandment to publicize the lighting of Chanukah candles?

One answer is that it is not enough for the Jew to be Jewish "in private only." The word for "[Jewish] education" is חינוך, which happens to have the same three-letter root as the word Chanukah. It is not enough to keep the light of Torah inside one's home. A Jew is meant to have Jewish values permeate all facets of life, and thus bring that light outside of the private domain and into the public domain. That idea leads to another thought.

The Talmud later states in Shabbat 22b that the purpose of the menorah is to serve as a testimony to mankind that the Divine Presence rests upon the people Israel. Since the only facet of the Temple service that embodies this testimony is the menorah, it is supposed to represent the role of the Jewish people in the Divine scheme of creation. The whole notion of being a "chosen people" can make some anxious, annoyed, or miffed, but as I have explored before, the whole idea of the Jews being a chosen people is in terms of spiritual vocation. Jews weren't chosen because they're better, but because G-d wanted the Jewish people to take on more responsibilities. That is why there are certain commandments (mitzvot) that are incumbent upon the Jewish people, but from which non-Jews are exempt. According to Jewish theology, these additional responsibilities help create a unique spiritual role for the Jew to positively influence the world in its own way (e.g., playing a pivotal role in the modern system of ethics). As the Jewish aphorism goes, Schwer zu zein ein Yid. "It's tough to be a Jew." Isaiah 42:6 refers to the Jewish people as or l'goyim (אור לגויים), a light unto nations, and that idea could not be more appropriate than during a Festival of Lights.

Chanukah typically falls in December, which from an astronomical standpoint, is the darkest period of the year for those in the Northern Hemisphere (and yes, that includes Israel). We use this period of pronounced physical darkness to remind us of the spiritual darkness in this world. As Einstein once said, "Darkness is the absence of light." Even just a little bit of light removes us from abject darkness.

In Judaism, ritual serves as an action-based meditation. While Judaism has a universalistic set of morals and values, it also comes with particularistic rituals. Without these rituals, there would be no discernible distinction between the Jew and the non-Jew. Bringing it back to the theme of darkness, we all have our moments of darkness. We need to bring light into our own lives. It is interesting how Jewish tradition historically emphasizes the holiness of light, as opposed to the destructiveness of fire. However, the Jew is not supposed to create that light just for the individual self, the household, or even just the Jewish people. It is meant to be shared so that everyone within one's sphere of influence can both literally and metaphorically bask in the light. A vital part of the aforementioned Jewish spiritual vocation is to help others, regardless of whether they are Jewish or not, and make their lives brighter than they were beforehand. Even though there is plenty of darkness, cruelty, and evil in the world, the publicizing of the menorah reminds us there is hope: if the Maccabees were able to overcome oppression and experience the power of rededication, so can we. It reminds us that even a little bit of light can take the edge off the darkness. May we usher in an era where such darkness doesn't exist, and we can know the true meaning of peace!

Tuesday, November 24, 2015

Why It Doesn't Make Sense to Block Syrian Refugees from the U.S.

Humanitarian crises perpetrated by scourges of the earth are an unfortunate, bleak reality. The lack of freedom and liberties uproots people from their homes and makes it all the more difficult to pursue life, liberty, and happiness. The latest humanitarian crisis that has been receiving considerable attention from the media is the Syrian crisis. Since March 2011, Syria has experienced a sectarian civil war. While the inhumanity behind the death toll is regrettably not anything new, Syrian citizens fleeing the country in droves is a more recent development. The European Commission is calling the Syrian civil war the worst humanitarian crisis since WWII. To date, the crisis has created about 4.3 million refugees.

Europe has dealt with its fair share of headaches in trying to admit Syrian refugees, and now it looks like it's America's turn. In light of the recent ISIS attack in France, the United States has become much more hesitant about admitting Syrian refugees. A majority of governors said they would refuse to admit Syrian refugees. Forgetting for a moment that such immigration issues are under the purview of the federal government, it makes me question whether we should let in Syrian refugees or institute a moratorium in the hopes that we can prevent an attack like the one in France.

As has been implied, the major concern about letting in Syrian refugees is national security. Critics of admitting refugees, particularly many of the Republican presidential candidates, are worried that ISIS soldiers will pose as refugees and work their way into the country to launch a terrorist attack. Thirteen percent of Syrian refugees are sympathetic towards ISIS, which can be perceived as reasonable cause for this concern. Much like with hyperbolic claims made about mass shootings in this country, we have to properly assess the data to make sure we understand the risk.

I have to ask what logical sense it would make for a terrorist from ISIS [or any other organization] to sneak in as a refugee. It takes one to two years for refugees to get processed before they ever set foot on American soil. And none of that counts the two-year vetting process from the UN High Commissioner for Refugees prior to being referred to the United States. The security check for a refugee is the most stringent type for anyone entering the United States, which is in contrast to Europe's more lax refugee process. It would be much easier for a potential ISIS terrorist to enter this country with a student visa, a business visa, a travel visa, or even by crossing the U.S.-Mexican border.

The U.S. has accepted only 2,148 Syrian refugees, or 0.05 percent of all Syrian refugees (the vast majority of whom are women and children), since the crisis began. Obama is looking to admit 10,000 Syrian refugees this upcoming year. Since 9/11, 785,000 refugees have been admitted to the United States. Out of that number, only 12 have been removed or arrested due to terrorism charges. Based on post-9/11 refugee data, the probability that a refugee would be involved with a terrorist organization is roughly 0.0015 percent (12 out of 785,000). More to the point, none of those refugees has carried out a terrorist attack on American soil. The probability of even just one ISIS member attacking through the manipulation of the refugee system is so small that it is hardly worth halting the refugee program. A defense policy specialist from the RAND Corporation pointed out that in most cases, "a would-be terrorist's refugee status had little or nothing to do with their radicalization and shift to terrorism." Shutting out thousands of refugees who legitimately need help on the minutest of chances that you might stop one terrorist is nonsensical.
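For anyone who wants to check the arithmetic behind those percentages, here is a minimal Python sketch. It takes the figures quoted in this post at face value (they are not independently verified here), and the variable and function names are my own for illustration. Note that the share of refugees tied to terrorism charges is only a rough stand-in for the probability that any given refugee is a threat.

```python
# Figures quoted in the post, taken as given (not independently verified).
SYRIAN_REFUGEES_TOTAL = 4_300_000   # refugees created by the Syrian crisis
SYRIAN_REFUGEES_ADMITTED = 2_148    # Syrian refugees admitted to the U.S.
REFUGEES_SINCE_2001 = 785_000       # all refugees admitted to the U.S. since 9/11
TERRORISM_CASES = 12                # removed or arrested on terrorism charges


def share_percent(part: int, whole: int) -> float:
    """Return part/whole expressed as a percentage."""
    return 100.0 * part / whole


admitted_share = share_percent(SYRIAN_REFUGEES_ADMITTED, SYRIAN_REFUGEES_TOTAL)
terrorism_share = share_percent(TERRORISM_CASES, REFUGEES_SINCE_2001)
one_in_n = REFUGEES_SINCE_2001 // TERRORISM_CASES

print(f"Share of Syrian refugees admitted to the U.S.: {admitted_share:.2f}%")
print(f"Share of post-9/11 refugees tied to terrorism charges: {terrorism_share:.4f}%")
print(f"That is about 1 in {one_in_n:,}")
```

With these inputs, the admitted share comes out to about 0.05 percent, and the terrorism-related share is on the order of a thousandth of a percent, or roughly 1 in 65,000.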

As limited research shows (e.g., Findley et al., 2013; Choi and Salehyan, 2013; Ekey, 2008), having refugees stay in refugee camps instead of finding them countries where they can live only exacerbates the issue. Europe will have to deal with this issue differently since the conditions across the Atlantic have encouraged greater Islamic radicalization, but at least for the United States, there isn't a good reason to discontinue admitting Syrian refugees. Other countries, such as Jordan, Turkey, and Lebanon, are much smaller countries that have admitted far more refugees than the United States, and in spite of some short-term shock, their economies have been adjusting (although keep in mind that the high refugee-to-general population ratio in Lebanon has put certain unique strains on Lebanon that would not be experienced in the United States).

There is no reason to believe that refugees would not be of economic benefit to the United States. The "we don't have enough jobs for the refugees" line is another variation of the argument that anti-immigration pundits use, which is based on the fallacy that the economy is a fixed pie. Immigrants are not a fiscal drain. If anything, immigration has been a net gain for the United States economy, and the same thing should happen when the U.S. government admits more Syrian refugees. Even with short-term adjustments to the labor market, the long-term economic benefits outweigh the upfront costs.

Ever since its debacle of not taking in Jewish refugees back in 1939, the United States has treated it as more of a moral imperative to act as an exemplar and actually take in refugees instead of giving in to isolationist tendencies. The country has admitted 3.75 million refugees since 1975 (not to mention all the ones from earlier), and the American economy hasn't imploded from taking in too many refugees. Barring a legitimate national security, crime, or health concern, there is no reason we shouldn't help by admitting some more Syrian refugees.

Tuesday, November 17, 2015

Consumer Financial Protection Bureau: An Agency for the American Consumer?

The Great Recession, which was the worst financial crisis since the Great Depression, sparked major financial regulation in the form of Dodd-Frank. Part of this 2,300-page legislation included Title X, which established the Consumer Financial Protection Bureau. The CFPB is an entity within the Board of Governors of the Federal Reserve System that is supposed to regulate the "offering and provision of consumer financial products and services under federal consumer financial laws." Republican presidential candidate Carly Fiorina took a jab at the CFPB, saying that it has "no congressional oversight." Politifact rated this claim half-true since the CFPB technically has some congressional oversight, even if it is significantly less than that of other agencies. Congressional oversight notwithstanding, what I would like to know is whether the CFPB has succeeded in its mission of "empowering consumers to take more control over their economic lives." The following points summarize the research I was able to find:

- The Mercatus Center released a working paper in September about unintended consequences from the CFPB, stating that "although stricter regulation of permissible debt collection practices can benefit consumers who are in default and increase demand for credit by consumers, overly restrictive regulation will result in higher interest rates and less access to credit for consumers...it may also have the unintended consequence of providing incentives for creditors to more rapidly escalate their efforts to more aggressive collection practices, including litigation."

- The Cato Institute published a research brief in February showing how the regulations under Dodd-Frank, and more specifically those of the CFPB, have disproportionately burdened smaller banks with compliance costs.

- The Mercatus Center also released a study back in August showing [with CFPB data] that consumer arbitration settlements are preferable to heavy-handed regulation from the CFPB.

- To be fair and bring in other points of view, the Left-leaning Center for American Progress makes the argument in its issue brief that the CFPB has protected consumers in the mortgage market.

- The Right-leaning Heritage Foundation put out an issue brief earlier this month showing how payday lenders provide a vital service to the financial sector, as well as how regulating these lenders compounds burdens in the financial sector.

- The CFPB has created a "Qualified Mortgage" category that has imposed strict home financing standards for lenders and borrowers alike.

- Checking accounts have become more expensive for lower-income households, which is why these households have gravitated towards prepaid cards. As the American Action Forum illustrates, recently proposed CFPB regulations would most probably eliminate prepaid cards as an option, thereby leaving more Americans unbanked.

As the Federalist Society points out, we should not "impose 19th century regulatory approaches to a 21st century credit consumer economy." Having an agency with a director who has de facto power to deem any consumer product or practice "unfair" or "abusive" is perturbing indeed. Back in its 2013 report on the CFPB, the Heritage Foundation made some suggestions for reform, including striking the undefined term "abusive" from the CFPB's purview, prohibiting public release of unconfirmed complaint data, abolishing the deference in judicial review granted to the CFPB, and outright abolition of the CFPB. At the end of the day, we should make it easier for the American people to have access to credit, not more difficult. While the passage of time will better tell us whether the CFPB helps the American consumer, it seems that there is enough out there to make us doubt whether CFPB regulations have done more good than harm.

If you want more information on the CFPB, you can go to the CFPB's website, read this Congressional Research Service brief, or read this financial audit from the Government Accountability Office that was released earlier this week.